Batch Normalization

See Sergey Ioffe and Christian Szegedy, "Batch Normalization: Accelerating Deep Network Training by Reducing Internal Covariate Shift", ICML 2015.

First, implement the forward pass:

def batchnorm_forward(x, gamma, beta, bn_param):

"""

Forward pass for batch normalization.

During training the sample mean and (uncorrected) sample variance are

computed from minibatch statistics and used to normalize the incoming data.

During training we also keep an exponentially decaying running mean of the

mean and variance of each feature, and these averages are used to normalize

data at test-time.

At each timestep we update the running averages for mean and variance using

an exponential decay based on the momentum parameter:

running_mean = momentum * running_mean + (1 - momentum) * sample_mean

running_var = momentum * running_var + (1 - momentum) * sample_var

Note that the batch normalization paper suggests a different test-time

behavior: they compute sample mean and variance for each feature using a

large number of training images rather than using a running average. For

this implementation we have chosen to use running averages instead since

they do not require an additional estimation step; the torch7

implementation of batch normalization also uses running averages.

Input:

- x: Data of shape (N, D)

- gamma: Scale parameter of shape (D,)

- beta: Shift parameter of shape (D,)

- bn_param: Dictionary with the following keys:

- mode: 'train' or 'test'; required

- eps: Constant for numeric stability

- momentum: Constant for running mean / variance.

- running_mean: Array of shape (D,) giving running mean of features

- running_var: Array of shape (D,) giving running variance of features

Returns a tuple of:

- out: of shape (N, D)

- cache: A tuple of values needed in the backward pass

"""

mode = bn_param['mode']

eps = bn_param.get('eps', 1e-5)

momentum = bn_param.get('momentum', 0.9)

N, D = x.shape

running_mean = bn_param.get('running_mean', np.zeros(D, dtype=x.dtype))

running_var = bn_param.get('running_var', np.zeros(D, dtype=x.dtype))

out, cache = None, None

if mode == 'train':

#######################################################################

# TODO: Implement the training-time forward pass for batch norm. #

# Use minibatch statistics to compute the mean and variance, use #

# these statistics to normalize the incoming data, and scale and #

# shift the normalized data using gamma and beta. #

# #

# You should store the output in the variable out. Any intermediates #

# that you need for the backward pass should be stored in the cache #

# variable. #

# #

# You should also use your computed sample mean and variance together #

# with the momentum variable to update the running mean and running #

# variance, storing your result in the running_mean and running_var #

# variables. #

# #

# Note that though you should be keeping track of the running #

# variance, you should normalize the data based on the standard #

# deviation (square root of variance) instead! #

# Referencing the original paper (https://arxiv.org/abs/1502.03167) #

# might prove to be helpful. #

#######################################################################

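sample_mean = np.mean(x, axis=0) # mean of each column (feature) of x, shape (D,)

sample_var = np.var(x, axis=0) # variance of each column (feature) of x, shape (D,)

x_hat = (x - sample_mean) / np.sqrt(sample_var + eps) # normalize; eps is a small positive constant that prevents division by zero

out = gamma * x_hat + beta # gamma is the scale, beta is the shift

cache = (x, sample_mean, sample_var, x_hat, eps, gamma, beta)

running_mean = momentum * running_mean + (1 - momentum) * sample_mean # exponentially decaying running average controlled by momentum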

running_var = momentum * running_var + (1 - momentum) * sample_var

#######################################################################

# END OF YOUR CODE #

#######################################################################

elif mode == 'test':

#######################################################################

# TODO: Implement the test-time forward pass for batch normalization. #

# Use the running mean and variance to normalize the incoming data, #

# then scale and shift the normalized data using gamma and beta. #

# Store the result in the out variable. #

#######################################################################

out = (x - running_mean) * gamma / (np.sqrt(running_var + eps)) + beta

#######################################################################

# END OF YOUR CODE #

#######################################################################

else:

raise ValueError('Invalid forward batchnorm mode "%s"' % mode)

# Store the updated running means back into bn_param

bn_param['running_mean'] = running_mean

bn_param['running_var'] = running_var

return out, cache

Check at training time:

Before batch normalization:

means: [ -2.3814598 -13.18038246 1.91780462]

stds: [27.18502186 34.21455511 37.68611762]

After batch normalization (gamma=1, beta=0)

means: [5.32907052e-17 7.04991621e-17 1.85962357e-17]

stds: [0.99999999 1. 1. ]

After batch normalization (gamma= [1. 2. 3.] , beta= [11. 12. 13.] )

means: [11. 12. 13.]

stds: [0.99999999 1.99999999 2.99999999]

After normalization, the means are close to beta (the shift) and the stds are close to gamma (the scale).
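The observation above can be reproduced with a few lines of NumPy. This is a minimal sketch rather than the assignment code: `batchnorm_train`, the seed, and the input distribution are assumptions chosen only for illustration.

```python
import numpy as np

def batchnorm_train(x, gamma, beta, eps=1e-5):
    """Training-time batch norm on (N, D) data using minibatch statistics."""
    mean = x.mean(axis=0)                    # per-feature mean, shape (D,)
    var = x.var(axis=0)                      # per-feature uncorrected variance, shape (D,)
    x_hat = (x - mean) / np.sqrt(var + eps)  # zero mean, unit variance per feature
    return gamma * x_hat + beta              # scale by gamma, shift by beta

rng = np.random.default_rng(0)               # arbitrary seed for this sketch
x = rng.normal(loc=-3.0, scale=30.0, size=(200, 3))
gamma = np.array([1.0, 2.0, 3.0])
beta = np.array([11.0, 12.0, 13.0])

out = batchnorm_train(x, gamma, beta)
print("means:", out.mean(axis=0))            # approximately beta
print("stds: ", out.std(axis=0))             # approximately gamma
```

The stds come out marginally below gamma because eps is added to the variance before taking the square root.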

Check at test time:

After batch normalization (test-time):

means: [-0.03927354 -0.04349152 -0.10452688]

stds: [1.01531428 1.01238373 0.97819988]

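The means are still close to 0 and the stds close to 1, but the deviation is larger than at training time, because the test-time pass normalizes with the running averages rather than with the statistics of the batch itself.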
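A small simulation makes the test-time behavior concrete. It is only a sketch under assumed settings (synthetic Gaussian data, 200 updates, gamma=1, beta=0); it reuses the running-average update rule from the docstring above.

```python
import numpy as np

rng = np.random.default_rng(1)               # arbitrary seed for this sketch
D, momentum, eps = 3, 0.9, 1e-5
running_mean = np.zeros(D)
running_var = np.zeros(D)

# "Training": update the running averages from many minibatches.
for _ in range(200):
    batch = rng.normal(loc=5.0, scale=3.0, size=(200, D))
    running_mean = momentum * running_mean + (1 - momentum) * batch.mean(axis=0)
    running_var = momentum * running_var + (1 - momentum) * batch.var(axis=0)

# "Test": normalize a fresh batch with the running averages (gamma=1, beta=0).
x_test = rng.normal(loc=5.0, scale=3.0, size=(200, D))
out = (x_test - running_mean) / np.sqrt(running_var + eps)

print("means:", out.mean(axis=0))            # near 0, but not exactly 0
print("stds: ", out.std(axis=0))             # near 1, but not exactly 1
```

The residual error comes from the gap between the running estimates and the statistics of the particular test batch.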

Implement the backward pass:

def batchnorm_backward(dout, cache):

"""

Backward pass for batch normalization.

For this implementation, you should write out a computation graph for

batch normalization on paper and propagate gradients backward through

intermediate nodes.

Inputs:

- dout: Upstream derivatives, of shape (N, D)

- cache: Variable of intermediates from batchnorm_forward.

Returns a tuple of:

- dx: Gradient with respect to inputs x, of shape (N, D)

- dgamma: Gradient with respect to scale parameter gamma, of shape (D,)

- dbeta: Gradient with respect to shift parameter beta, of shape (D,)

"""

dx, dgamma, dbeta = None, None, None

###########################################################################

# TODO: Implement the backward pass for batch normalization. Store the #

# results in the dx, dgamma, and dbeta variables. #

# Referencing the original paper (https://arxiv.org/abs/1502.03167) #

# might prove to be helpful. #

###########################################################################

# Compute the gradients with the chain rule, one intermediate node at a time

x,mean,var,x_hat,eps,gamma,beta = cache

N = x.shape[0]

dgamma = np.sum(dout * x_hat,axis = 0)

dbeta = np.sum(dout * 1.0,axis = 0)

dx_hat = dout * gamma

dx_hat_numerator = dx_hat / np.sqrt(var + eps)

dx_hat_denominator = np.sum(dx_hat * (x - mean),axis = 0)

dx_1 = dx_hat_numerator

dvar = -0.5 * ((var + eps) ** (-1.5)) * dx_hat_denominator

dmean = -1.0 * np.sum(dx_hat_numerator,axis = 0) + dvar * np.mean(-2.0 * (x - mean),axis = 0)

dx_var = dvar * 2.0 / N * (x - mean)

dx_mean = dmean * 1.0 / N

dx = dx_1 + dx_var + dx_mean

###########################################################################

# END OF YOUR CODE #

###########################################################################

return dx, dgamma, dbeta

dx error: 1.7029261167605239e-09

dgamma error: 7.420414216247087e-13

dbeta error: 2.8795057655839487e-12
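Errors like these come from comparing the analytic gradients against numerical ones. The sketch below shows one way such a check can be done; `bn_forward`, `bn_backward`, `numeric_grad`, and `rel_error` are hypothetical stand-ins written for this example, not the course's own helper functions.

```python
import numpy as np

def bn_forward(x, gamma, beta, eps=1e-5):
    mean, var = x.mean(axis=0), x.var(axis=0)
    x_hat = (x - mean) / np.sqrt(var + eps)
    return gamma * x_hat + beta, (x, mean, var, x_hat, eps, gamma)

def bn_backward(dout, cache):
    # Same staged computation as batchnorm_backward in the text.
    x, mean, var, x_hat, eps, gamma = cache
    N = x.shape[0]
    dx_hat = dout * gamma
    dvar = -0.5 * (var + eps) ** -1.5 * np.sum(dx_hat * (x - mean), axis=0)
    dmean = (-np.sum(dx_hat / np.sqrt(var + eps), axis=0)
             + dvar * np.mean(-2.0 * (x - mean), axis=0))
    dx = dx_hat / np.sqrt(var + eps) + dvar * 2.0 / N * (x - mean) + dmean / N
    return dx, np.sum(dout * x_hat, axis=0), np.sum(dout, axis=0)

def numeric_grad(f, v, dout, h=1e-5):
    """Centered-difference gradient of sum(f(v) * dout) with respect to v."""
    grad = np.zeros_like(v)
    for idx in np.ndindex(*v.shape):
        old = v[idx]
        v[idx] = old + h
        pos = f(v).copy()
        v[idx] = old - h
        neg = f(v).copy()
        v[idx] = old                          # restore the original value
        grad[idx] = np.sum((pos - neg) * dout) / (2 * h)
    return grad

def rel_error(a, b):
    return np.max(np.abs(a - b) / np.maximum(1e-8, np.abs(a) + np.abs(b)))

rng = np.random.default_rng(0)                # arbitrary seed for this sketch
x = 5 * rng.standard_normal((4, 5)) + 12
gamma, beta = rng.standard_normal(5), rng.standard_normal(5)
dout = rng.standard_normal((4, 5))

_, cache = bn_forward(x, gamma, beta)
dx, dgamma, dbeta = bn_backward(dout, cache)
dx_num = numeric_grad(lambda v: bn_forward(v, gamma, beta)[0], x, dout)
dgamma_num = numeric_grad(lambda g: bn_forward(x, g, beta)[0], gamma, dout)
dbeta_num = numeric_grad(lambda b: bn_forward(x, gamma, b)[0], beta, dout)

print("dx error:    ", rel_error(dx_num, dx))
print("dgamma error:", rel_error(dgamma_num, dgamma))
print("dbeta error: ", rel_error(dbeta_num, dbeta))
```

The check should be run in float64; with float32 the finite-difference noise would dominate the comparison.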

Compute the gradients using the formulas derived in the paper:

def batchnorm_backward_alt(dout, cache):

"""

Alternative backward pass for batch normalization.

For this implementation you should work out the derivatives for the batch

normalization backward pass on paper and simplify as much as possible. You

should be able to derive a simple expression for the backward pass.

See the jupyter notebook for more hints.

Note: This implementation should expect to receive the same cache variable

as batchnorm_backward, but might not use all of the values in the cache.

Inputs / outputs: Same as batchnorm_backward

"""

dx, dgamma, dbeta = None, None, None

###########################################################################

# TODO: Implement the backward pass for batch normalization. Store the #

# results in the dx, dgamma, and dbeta variables. #

# #

# After computing the gradient with respect to the centered inputs, you #

# should be able to compute gradients with respect to the inputs in a #

# single statement; our implementation fits on a single 80-character line.#

###########################################################################

# Compute the gradients with the formulas derived in the paper

x,mean,var,x_hat,eps,gamma,beta = cache

N = x.shape[0]

dbeta = np.sum(dout,axis = 0)

dgamma = np.sum(x_hat * dout,axis = 0)

dx_norm = dout * gamma

dv = ((x - mean) * -0.5 * (var + eps)**-1.5 * dx_norm).sum(axis = 0)

dm = (dx_norm * -1 * (var + eps)** -0.5).sum(axis = 0) + (dv * (x - mean) * -2 / N).sum(axis = 0)

dx = dx_norm / (var + eps) ** 0.5 + dv * 2 * (x - mean) / N + dm / N

###########################################################################

# END OF YOUR CODE #

###########################################################################

return dx, dgamma, dbeta

dx difference: 1.2266724949639026e-12

dgamma difference: 0.0

dbeta difference: 0.0

speedup: 0.83x

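The result is a little embarrassing: at 0.83x, this version is actually slower than the first one. The code above still computes dv and dm as separate intermediates, so it does essentially the same work as batchnorm_backward.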
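For comparison, the gradient with respect to x can be collapsed into a single expression by substituting dv and dm back into dx. The sketch below is an assumption-labeled illustration, not the reference solution: both function names are hypothetical, and each recomputes the statistics from x instead of reading them from a cache. Whether the collapsed form is actually faster depends on the machine and the array sizes.

```python
import numpy as np

def bn_backward_staged(dout, x, gamma, eps=1e-5):
    """dx computed through explicit dv (variance) and dm (mean) intermediates."""
    mean, var = x.mean(axis=0), x.var(axis=0)
    N = x.shape[0]
    dx_hat = dout * gamma
    dv = np.sum(dx_hat * (x - mean) * -0.5 * (var + eps) ** -1.5, axis=0)
    dm = (np.sum(-dx_hat / np.sqrt(var + eps), axis=0)
          + dv * np.mean(-2.0 * (x - mean), axis=0))
    return dx_hat / np.sqrt(var + eps) + dv * 2.0 * (x - mean) / N + dm / N

def bn_backward_simplified(dout, x, gamma, eps=1e-5):
    """dx with the mean and variance paths folded into one expression."""
    mean, var = x.mean(axis=0), x.var(axis=0)
    N = x.shape[0]
    std = np.sqrt(var + eps)
    x_hat = (x - mean) / std
    dbeta = dout.sum(axis=0)
    dgamma = (dout * x_hat).sum(axis=0)
    return gamma / (N * std) * (N * dout - dbeta - x_hat * dgamma)

rng = np.random.default_rng(0)                # arbitrary seed for this sketch
x = 5 * rng.standard_normal((100, 50)) + 12
gamma = rng.standard_normal(50)
dout = rng.standard_normal((100, 50))

dx_staged = bn_backward_staged(dout, x, gamma)
dx_simple = bn_backward_simplified(dout, x, gamma)
print("max abs difference:", np.max(np.abs(dx_staged - dx_simple)))
```

The simplification works because the sum of (x - mean) over the batch is zero, which removes the second term of dm.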

Testing a fully connected network with batchnorm:

The multi-layer fully connected network:

class FullyConnectedNet(object):

"""

A fully-connected neural network with an arbitrary number of hidden layers,

ReLU nonlinearities, and a softmax loss function. This will also implement

dropout and batch/layer normalization as options. For a network with L layers,

the architecture will be

{affine - [batch/layer norm] - relu - [dropout]} x (L - 1) - affine - softmax

where batch/layer normalization and dropout are optional, and the {...} block is

repeated L - 1 times.

Similar to the TwoLayerNet above, learnable parameters are stored in the

self.params dictionary and will be learned using the Solver class.

"""

def __init__(self, hidden_dims, input_dim=3*32*32, num_classes=10,

dropout=1, normalization=None, reg=0.0,

weight_scale=1e-2, dtype=np.float32, seed=None):

"""

Initialize a new FullyConnectedNet.

Inputs:

- hidden_dims: A list of integers giving the size of each hidden layer.

- input_dim: An integer giving the size of the input.

- num_classes: An integer giving the number of classes to classify.

- dropout: Scalar between 0 and 1 giving dropout strength. If dropout=1 then

the network should not use dropout at all.

- normalization: What type of normalization the network should use. Valid values

are "batchnorm", "layernorm", or None for no normalization (the default).

- reg: Scalar giving L2 regularization strength.

- weight_scale: Scalar giving the standard deviation for random

initialization of the weights.

- dtype: A numpy datatype object; all computations will be performed using

this datatype. float32 is faster but less accurate, so you should use

float64 for numeric gradient checking.

- seed: If not None, then pass this random seed to the dropout layers. This

will make the dropout layers deterministic so we can gradient check the

model. By default no seed is set.

"""

self.normalization = normalization

self.use_dropout = dropout != 1

self.reg = reg

self.num_layers = 1 + len(hidden_dims)

self.dtype = dtype

self.params = {}

############################################################################

# TODO: Initialize the parameters of the network, storing all values in #

# the self.params dictionary. Store weights and biases for the first layer #

# in W1 and b1; for the second layer use W2 and b2, etc. Weights should be #

# initialized from a normal distribution centered at 0 with standard #

# deviation equal to weight_scale. Biases should be initialized to zero. #

# #

# When using batch normalization, store scale and shift parameters for the #

# first layer in gamma1 and beta1; for the second layer use gamma2 and #

# beta2, etc. Scale parameters should be initialized to ones and shift #

# parameters should be initialized to zeros. #

############################################################################

# Initialize the parameters of all hidden layers

in_dim = input_dim # D

for i,h_dim in enumerate(hidden_dims): # (0, H1), (1, H2), ...

self.params['W%d' %(i+1,)] = weight_scale * np.random.randn(in_dim,h_dim)

self.params['b%d' %(i+1,)] = np.zeros((h_dim,))

if self.normalization=='batchnorm':

self.params['gamma%d' %(i+1,)] = np.ones((h_dim,)) # initialized to ones

self.params['beta%d' %(i+1,)] = np.zeros((h_dim,)) # initialized to zeros

in_dim = h_dim # this layer's output size is the next layer's input size

# Initialize the parameters of the output layer

self.params['W%d' %(self.num_layers,)] = weight_scale * np.random.randn(in_dim,num_classes)

self.params['b%d' %(self.num_layers,)] = np.zeros((num_classes,))

############################################################################

# END OF YOUR CODE #

############################################################################

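# When dropout is enabled, the same dropout parameter dictionary self.dropout_param is passed to every layer, so that each layer knows the dropout parameter p and the network's current mode (train/test).

self.dropout_param = {} # dropout parameter dictionary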

if self.use_dropout:

self.dropout_param = {'mode': 'train', 'p': dropout}

if seed is not None:

self.dropout_param['seed'] = seed

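# When batch normalization is enabled, we keep a list self.bn_params of BN parameter dictionaries that track each layer's running mean and variance. self.bn_params[0] holds the parameters of the first BN layer in the forward pass, self.bn_params[1] those of the second BN layer, and so on.

self.bn_params = [] # list of BN parameter dictionaries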

if self.normalization=='batchnorm':

self.bn_params = [{'mode': 'train'} for i in range(self.num_layers - 1)]

if self.normalization=='layernorm':

self.bn_params = [{} for i in range(self.num_layers - 1)]

# Cast all parameters to the correct datatype

for k, v in self.params.items():

self.params[k] = v.astype(dtype)

def loss(self, X, y=None):

"""

Compute loss and gradient for the fully-connected net.

Input / output: Same as TwoLayerNet above.

"""

X = X.astype(self.dtype)

mode = 'test' if y is None else 'train'

# Set train/test mode for batchnorm params and dropout param since they

# behave differently during training and testing.

if self.use_dropout:

self.dropout_param['mode'] = mode

if self.normalization=='batchnorm':

for bn_param in self.bn_params:

bn_param['mode'] = mode

scores = None

############################################################################

# TODO: Implement the forward pass for the fully-connected net, computing #

# the class scores for X and storing them in the scores variable. #

# #

# When using dropout, you'll need to pass self.dropout_param to each #

# dropout forward pass. #

# #

# When using batch normalization, you'll need to pass self.bn_params[0] to #

# the forward pass for the first batch normalization layer, pass #

# self.bn_params[1] to the forward pass for the second batch normalization #

# layer, etc. #

############################################################################

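fc_mix_cache = {} # cache of each hidden layer's forward pass

if self.use_dropout: # if dropout is enabled, initialize its cache dictionary as well

dp_cache = {}

# Loop over the hidden layers starting from the first, passing the data out forward and saving each layer's cache

out = X

for i in range(self.num_layers - 1): # loop over each hidden layer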

w,b = self.params['W%d' %(i+1,)],self.params['b%d' %(i+1,)]

if self.normalization == 'batchnorm':

gamma = self.params['gamma%d' %(i+1,)]

beta = self.params['beta%d' %(i+1,)]

out,fc_mix_cache[i] = affine_bn_relu_forward(out,w,b,gamma,beta,self.bn_params[i])

else:

out,fc_mix_cache[i] = affine_relu_forward(out,w,b)

if self.use_dropout:

out,dp_cache[i] = dropout_forward(out,self.dropout_param)

# Final output layer

w = self.params['W%d' %(self.num_layers,)]

b = self.params['b%d' %(self.num_layers,)]

out,out_cache = affine_forward(out,w,b)

scores = out

############################################################################

# END OF YOUR CODE #

############################################################################

# If test mode return early

if mode == 'test':

return scores

loss, grads = 0.0, {}

############################################################################

# TODO: Implement the backward pass for the fully-connected net. Store the #

# loss in the loss variable and gradients in the grads dictionary. Compute #

# data loss using softmax, and make sure that grads[k] holds the gradients #

# for self.params[k]. Don't forget to add L2 regularization! #

# #

# When using batch/layer normalization, you don't need to regularize the scale #

# and shift parameters. #

# #

# NOTE: To ensure that your implementation matches ours and you pass the #

# automated tests, make sure that your L2 regularization includes a factor #

# of 0.5 to simplify the expression for the gradient. #

############################################################################

loss,dout = softmax_loss(scores,y)

loss += 0.5 * self.reg * np.sum(self.params['W%d' %(self.num_layers,)] ** 2)

# Backpropagate through the output layer and store the gradients in the grads dictionary:

dout,dw,db = affine_backward(dout,out_cache)

grads['W%d' %(self.num_layers,)] = dw + self.reg * self.params['W%d' %(self.num_layers,)]

grads['b%d' %(self.num_layers,)] = db

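# Backpropagate through each hidden layer, filling in the grads dictionary and accumulating the regularization terms into loss

for i in range(self.num_layers - 1):

ri = self.num_layers - 2 - i # 0-based index of the current hidden layer, going from the last one to the first

loss += 0.5 * self.reg * np.sum(self.params['W%d' %(ri+1,)] ** 2) # add this layer's L2 regularization term to loss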

if self.use_dropout:

dout = dropout_backward(dout,dp_cache[ri])

if self.normalization == 'batchnorm':

dout,dw,db,dgamma,dbeta = affine_bn_relu_backward(dout,fc_mix_cache[ri])

grads['gamma%d' %(ri+1,)] = dgamma

grads['beta%d' %(ri+1,)] = dbeta

else:

dout,dw,db = affine_relu_backward(dout,fc_mix_cache[ri])

grads['W%d' %(ri+1,)] = dw + self.reg * self.params['W%d' %(ri+1,)]

grads['b%d' %(ri+1,)] = db

############################################################################

# END OF YOUR CODE #

############################################################################

return loss, grads

The two helper layers used above:

def affine_bn_relu_forward(x, w, b, gamma, beta, bn_param):
    affine_out, fc_cache = affine_forward(x, w, b)  # affine (fully connected) layer
    bn_out, bn_cache = batchnorm_forward(affine_out, gamma, beta, bn_param)  # batchnorm layer
    relu_out, relu_cache = relu_forward(bn_out)  # ReLU layer
    cache = (fc_cache, bn_cache, relu_cache)
    return relu_out, cache

def affine_bn_relu_backward(dout, cache):
    fc_cache, bn_cache, relu_cache = cache
    drelu_out = relu_backward(dout, relu_cache)  # ReLU layer
    dbn_out, dgamma, dbeta = batchnorm_backward(drelu_out, bn_cache)  # batchnorm layer
    dx, dw, db = affine_backward(dbn_out, fc_cache)  # affine layer
    return dx, dw, db, dgamma, dbeta

Running check with reg = 0

Initial loss: 2.2611955101340957

W1 relative error: 1.10e-04

W2 relative error: 2.85e-06

W3 relative error: 4.05e-10

b1 relative error: 4.44e-08

b2 relative error: 2.22e-08

b3 relative error: 1.01e-10

beta1 relative error: 7.33e-09

beta2 relative error: 1.89e-09

gamma1 relative error: 6.96e-09

gamma2 relative error: 1.96e-09

Running check with reg = 3.14

Initial loss: 6.996533220108303

W1 relative error: 1.98e-06

W2 relative error: 2.28e-06

W3 relative error: 1.11e-08

b1 relative error: 2.78e-09

b2 relative error: 2.22e-08

b3 relative error: 2.10e-10

beta1 relative error: 6.65e-09

beta2 relative error: 4.23e-09

gamma1 relative error: 6.27e-09

gamma2 relative error: 5.28e-09

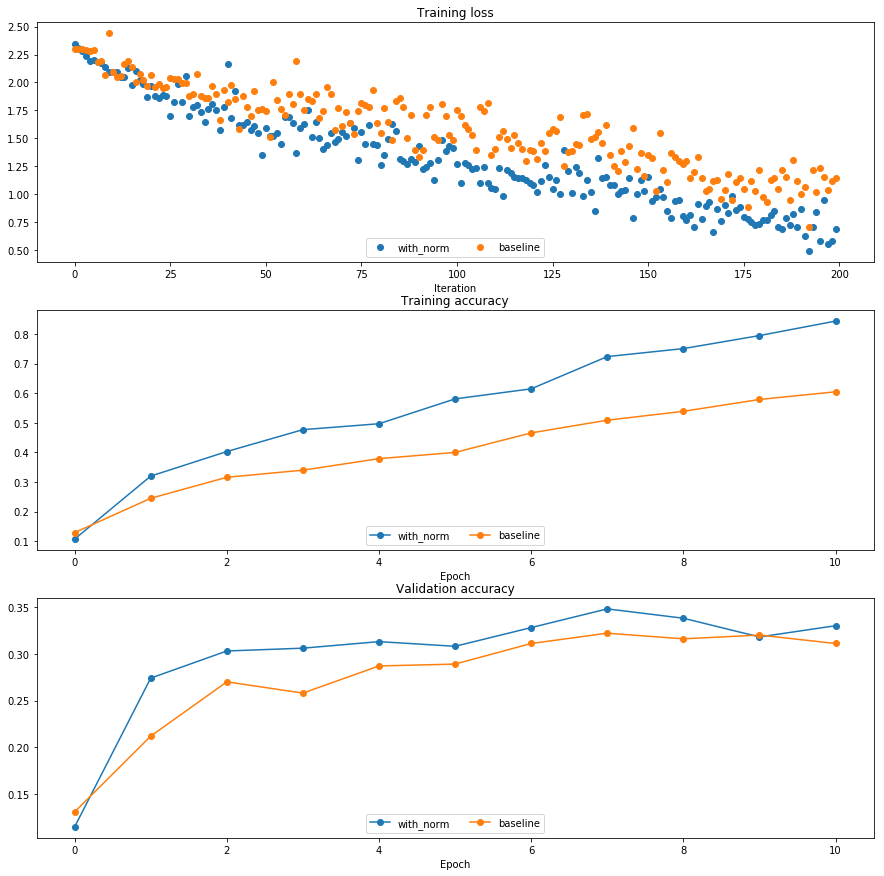

A six-layer network trained on 10000 training samples is used to test the effect of batchnorm.

As expected, the network with batchnorm performs better.

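Interaction between initialization and batchnorm

With batchnorm, the weight initialization scale has much less influence on training. In other words, batchnorm helps keep things under control when the weights are poorly initialized; put more formally, a network with batchnorm is more robust to weight initialization.

With poor weight initialization, the batchnorm network clearly outperforms the baseline without it; with good weight initialization, the two perform comparably.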
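One way to see where this robustness comes from: the output of training-time batchnorm is (up to eps) unchanged when the weights feeding it are multiplied by a positive constant. The sketch below illustrates this under assumed settings; `batchnorm_train`, the shapes, and the scale factors are hypothetical choices, and the invariance breaks down once the variance becomes comparable to eps.

```python
import numpy as np

def batchnorm_train(x, eps=1e-5):
    """Training-time batch norm with gamma=1, beta=0."""
    return (x - x.mean(axis=0)) / np.sqrt(x.var(axis=0) + eps)

rng = np.random.default_rng(0)                # arbitrary seed for this sketch
x = rng.standard_normal((64, 20))             # a minibatch of inputs
W = rng.standard_normal((20, 10))             # weights of one affine layer

ref = batchnorm_train(x @ W)
gaps = {}
for scale in (0.5, 10.0):
    raw_ratio = np.std(x @ (scale * W)) / np.std(x @ W)
    gaps[scale] = np.max(np.abs(batchnorm_train(x @ (scale * W)) - ref))
    print(f"scale={scale}: raw std ratio={raw_ratio:.2f}, "
          f"max BN output difference={gaps[scale]:.2e}")
```

Without batchnorm the activations scale directly with the weights, while the normalized activations stay essentially the same.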

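Relationship between batchnorm and batch size:

In this experiment, larger batches give better results, which is unsurprising: the larger the batch, the more accurate the estimates of the mean and variance. So, hardware permitting, a larger batch is preferable.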
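The claim about estimation accuracy can be checked numerically. The following sketch draws many synthetic minibatches and measures how far the batch mean strays from the true mean; the batch sizes, trial count, and seed are assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)                # arbitrary seed for this sketch
true_mean, true_std, trials = 0.0, 1.0, 2000

errors = {}
for batch_size in (5, 50, 500):
    batches = rng.normal(true_mean, true_std, size=(trials, batch_size))
    # Spread of the minibatch mean around the true mean, across many batches.
    errors[batch_size] = batches.mean(axis=1).std()
    print(f"batch_size={batch_size:4d}  std of batch mean={errors[batch_size]:.3f}")
```

The spread shrinks roughly as 1/sqrt(batch_size), so very small batches give batchnorm noisy statistics to normalize with.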

Layer Normalization:

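LN normalizes the inputs to all neurons of a given layer. For a single sample, all neurons in the layer share the same mean and variance, while different input samples have different means and variances. LN works notably well in RNNs, but in CNNs it tends to underperform BN.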
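The difference between the two schemes comes down to the axis over which the statistics are taken. A minimal sketch on an (N, D) array, with gamma=1 and beta=0 and an arbitrarily chosen input distribution:

```python
import numpy as np

rng = np.random.default_rng(0)                       # arbitrary seed for this sketch
x = rng.normal(loc=10.0, scale=4.0, size=(4, 6))     # (N, D): 4 samples, 6 features
eps = 1e-5

# Batch norm: statistics per feature, computed across the batch (axis=0).
bn = (x - x.mean(axis=0)) / np.sqrt(x.var(axis=0) + eps)

# Layer norm: statistics per sample, computed across the features (axis=1).
ln = (x - x.mean(axis=1, keepdims=True)) / np.sqrt(x.var(axis=1, keepdims=True) + eps)

print("BN column means:", bn.mean(axis=0))           # ~0 for each of the 6 features
print("LN row means:   ", ln.mean(axis=1))           # ~0 for each of the 4 samples
```

Transposing x and reusing the batchnorm code, as the implementation below does, is equivalent to switching the axis.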

Forward pass:

def layernorm_forward(x, gamma, beta, ln_param):

"""

Forward pass for layer normalization.

During both training and test-time, the incoming data is normalized per data-point,

before being scaled by gamma and beta parameters identical to that of batch normalization.

Note that in contrast to batch normalization, the behavior during train and test-time for

layer normalization are identical, and we do not need to keep track of running averages

of any sort.

Input:

- x: Data of shape (N, D)

- gamma: Scale parameter of shape (D,)

- beta: Shift parameter of shape (D,)

- ln_param: Dictionary with the following keys:

- eps: Constant for numeric stability

Returns a tuple of:

- out: of shape (N, D)

- cache: A tuple of values needed in the backward pass

"""

out, cache = None, None

eps = ln_param.get('eps', 1e-5)

###########################################################################

# TODO: Implement the training-time forward pass for layer norm. #

# Normalize the incoming data, and scale and shift the normalized data #

# using gamma and beta. #

# HINT: this can be done by slightly modifying your training-time #

# implementation of batch normalization, and inserting a line or two of #

# well-placed code. In particular, can you think of any matrix #

# transformations you could perform, that would enable you to copy over #

# the batch norm code and leave it almost unchanged? #

###########################################################################

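# Layer norm computes the mean and variance over each individual sample (1, D), so unlike batch norm it does not depend on the batch. It reportedly works less well than batch norm in convolutional networks.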

x_T = x.T

sample_mean = np.mean(x_T,axis = 0)

sample_var = np.var(x_T,axis = 0)

x_norm_T = (x_T - sample_mean) / np.sqrt(sample_var + eps)

x_norm = x_norm_T.T

out = x_norm * gamma + beta

cache = (x,x_norm,gamma,sample_mean,sample_var,eps)

###########################################################################

# END OF YOUR CODE #

###########################################################################

return out, cache

Before layer normalization:

means: [-59.06673243 -47.60782686 -43.31137368 -26.40991744]

stds: [10.07429373 28.39478981 35.28360729 4.01831507]

After layer normalization (gamma=1, beta=0)

means: [ 4.81096644e-16 -7.40148683e-17 2.22044605e-16 -5.92118946e-16]

stds: [0.99999995 0.99999999 1. 0.99999969]

After layer normalization (gamma= [3. 3. 3.] , beta= [5. 5. 5.] )

means: [5. 5. 5. 5.]

stds: [2.99999985 2.99999998 2.99999999 2.99999907]

Backward pass:

def layernorm_backward(dout, cache):

"""

Backward pass for layer normalization.

For this implementation, you can heavily rely on the work you've done already

for batch normalization.

Inputs:

- dout: Upstream derivatives, of shape (N, D)

- cache: Variable of intermediates from layernorm_forward.

Returns a tuple of:

- dx: Gradient with respect to inputs x, of shape (N, D)

- dgamma: Gradient with respect to scale parameter gamma, of shape (D,)

- dbeta: Gradient with respect to shift parameter beta, of shape (D,)

"""

dx, dgamma, dbeta = None, None, None

###########################################################################

# TODO: Implement the backward pass for layer norm. #

# #

# HINT: this can be done by slightly modifying your training-time #

# implementation of batch normalization. The hints to the forward pass #

# still apply! #

###########################################################################

x,x_norm,gamma,sample_mean,sample_var,eps = cache

x_T = x.T

dout_T = dout.T

N = x_T.shape[0]

dbeta = np.sum(dout,axis = 0)

dgamma = np.sum(x_norm * dout,axis = 0)

dx_norm = dout_T * gamma[:,np.newaxis]

dv = ((x_T - sample_mean) * -0.5 * (sample_var + eps)** -1.5 * dx_norm).sum(axis = 0)

dm = (dx_norm * -1 * (sample_var + eps) ** -0.5).sum(axis = 0) + (dv * (x_T - sample_mean) * -2 / N).sum(axis = 0)

dx_T = dx_norm / (sample_var + eps)** 0.5 + dv * 2 * (x_T - sample_mean) / N + dm / N

dx = dx_T.T

###########################################################################

# END OF YOUR CODE #

###########################################################################

return dx, dgamma, dbeta

dx error: 1.433615657860454e-09

dgamma error: 4.519489546032799e-12

dbeta error: 2.276445013433725e-12

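Relationship between layernorm and batch size:

The results show no dependence on batch size, which is consistent with layer norm computing its statistics per sample rather than across the batch.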
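This independence can be verified directly: the normalized output for a given sample is the same no matter which batch it sits in, whereas the batchnorm output changes. The functions below are hypothetical minimal versions (gamma=1, beta=0) written for this check.

```python
import numpy as np

def layernorm(x, eps=1e-5):
    mean = x.mean(axis=1, keepdims=True)      # one mean per sample
    var = x.var(axis=1, keepdims=True)        # one variance per sample
    return (x - mean) / np.sqrt(var + eps)

def batchnorm_train(x, eps=1e-5):
    return (x - x.mean(axis=0)) / np.sqrt(x.var(axis=0) + eps)

rng = np.random.default_rng(0)                # arbitrary seed for this sketch
x = rng.standard_normal((32, 8))

# Output for the first sample, computed inside batches of different sizes.
ln_small, ln_large = layernorm(x[:2])[0], layernorm(x)[0]
bn_small, bn_large = batchnorm_train(x[:2])[0], batchnorm_train(x)[0]

print("LN output changes with the batch:", not np.allclose(ln_small, ln_large))  # False
print("BN output changes with the batch:", not np.allclose(bn_small, bn_large))  # True
```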